Table of contents

TL;DR overview

What is software reliability?

The verification bottleneck: Why reliability is harder in 2026

What are the best practices for improving software reliability?

What are the core metrics to measure and improve system stability?

How SonarQube helps you achieve production-ready code reliability

Next steps for software reliability

Author: Sam Hecht

TL;DR overview

- Software reliability measures how consistently systems perform intended functions without failure, moving beyond uptime to encompass correctness, design, maintainability, and security.

- AI-driven development creates a verification bottleneck where rapid code generation leads to accumulated debt and "AI slop" without rigorous, automated analysis.

- Building production-ready systems requires integrating continuous scanning and verification to eliminate hidden defects.

- Organizations can ensure system stability by tracking change failure rates and using deterministic verification platforms to validate AI-generated output at scale.

Software reliability used to be about finding bugs after the code was completed but before the application reached production. Today, the challenge has shifted. As AI coding assistants and agents generate code at ten times human speed, we are facing a "code verification bottleneck."

If your team is generating thousands of lines of code daily but spending even more time reviewing and fixing them, you aren't just building software; you are accumulating verification debt. This article explores how to navigate this new landscape to ensure your software remains stable, secure, and production-ready.

What is software reliability?

Software reliability is the measure of how consistently a system performs its intended function without failure over time, under defined conditions. In modern engineering, it extends far beyond simply "working code." It encompasses correctness, stability, maintainability, and security, ensuring that applications behave predictably across environments, workloads, and edge cases.

Defining software reliability for modern engineering

In its simplest form, software reliability is the probability that a system will perform its intended function without failure for a specific period of time. It is not just about whether the code runs; it is about whether the code behaves predictably under stress.

Reliability is a long-term measure of trust. While speed-to-market is the goal for many organizations, shipping unreliable code leads to a cycle of rework and "toil" that eventually grinds innovation to a halt.

Reliability vs. availability: Knowing the difference

Many teams use these terms interchangeably, but they represent different goals. Availability is about "uptime"—is the system up and running when the user needs it? A website can be available but still be unreliable if certain features frequently fail or produce incorrect results.

Reliability focuses on consistency and correctness. A system might have 99.9% availability but low reliability if it silently corrupts data or has hard-to-find vulnerabilities. To build high-quality software, you must optimize for both.
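To make the distinction concrete, here is a minimal sketch that converts an availability target into a yearly downtime budget and, separately, computes a correctness rate. The function names and the request counts are illustrative assumptions, not figures from any real system.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def downtime_budget_hours(availability_pct: float) -> float:
    """Hours per year a system may be down while still meeting the target."""
    return (1 - availability_pct / 100) * HOURS_PER_YEAR


def correctness_rate(total_requests: int, incorrect_responses: int) -> float:
    """Fraction of served requests that returned a correct result."""
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    return 1 - incorrect_responses / total_requests


# Hypothetical service: up 99.9% of the time, yet 2% of its responses are wrong.
budget = downtime_budget_hours(99.9)
rate = correctness_rate(total_requests=1_000_000, incorrect_responses=20_000)
print(f"Downtime budget: {budget:.2f} h/year")
print(f"Correct responses: {rate:.1%}")
```

The two numbers are independent: a service can stay within its downtime budget and still return wrong answers, which is why both need to be tracked.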

The verification bottleneck: Why reliability is harder in 2026

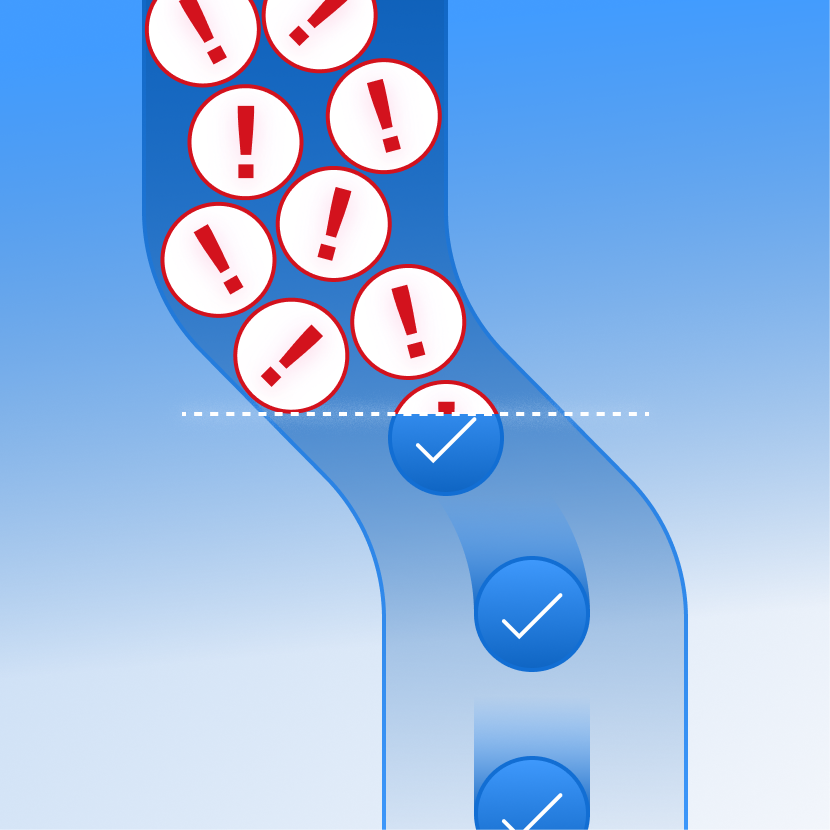

The rapid adoption of AI agents and coding assistants has fundamentally changed the software development lifecycle. While these tools can generate code at an unprecedented volume, they have introduced a massive "verification bottleneck." The speed of generation has far outpaced the human capacity for manual review, creating a growing gap in trust and system stability.

This reliability challenge exists because AI-generated code often suffers from "hallucinated" logic—producing snippets that appear syntactically correct but fail in production or reference non-existent library functions. These subtle logical errors and security vulnerabilities are significantly harder to identify during a standard review than traditional human mistakes. Without a rigorous, automated verification layer, this influx of code leads to "verification debt," where the cost of validating and fixing AI output eventually offsets the initial productivity gains. Sonar data reveals this critical verification gap in AI coding is becoming a widespread industry concern.

Addressing the rise of "AI slop" and code bloat

Research shows that the most capable AI models often write the most verbose code, creating a larger surface area for bugs. This "AI slop" compounds technical debt and erodes the architectural integrity of the codebase over time. Understanding why Claude Opus 4.6 requires verification illustrates how even advanced models need rigorous oversight.

To maintain reliability, teams must move away from blind trust. The new paradigm requires a "vibe, then verify" approach: using AI for creative generation (the vibe) but relying on rigorous, deterministic analysis to ensure the output meets organizational standards (the verify). Addressing the AI coding trust gap is essential for sustainable development practices.

What are the best practices for improving software reliability?

Enforce coding standards and continuous code cleanup

Consistent coding standards, enforced through automated code reviews and static analysis, ensure readability, maintainability, and fewer defects across programming languages. Ongoing code cleanup prevents small issues from compounding into larger reliability risks.

Prioritize regular code refactoring

Frequent code refactoring reduces complexity, improves structure, and limits the growth of technical debt—especially critical as AI-generated code increases volume. Simpler, well-structured code is inherently easier to verify and more reliable over time.

Integrate continuous vulnerability scanning

Embedding vulnerability scanning into CI/CD pipelines helps detect security flaws early, reinforcing both application security and system reliability. Applying secure coding practices ensures that failures caused by exploitable weaknesses are minimized.

Build a comprehensive testing strategy

A layered testing approach, combining unit, integration, and fuzz testing, strengthens software verification by validating both expected behavior and edge cases. This ensures systems remain stable under real-world and unpredictable conditions.
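As a rough illustration of two of these layers, the sketch below pairs a hand-written unit test with a simple randomized test that checks an invariant across many generated inputs. The function `clamp_percentage` is a hypothetical example, and the random loop is a simplified stand-in for fuzzing; dedicated fuzzers typically use coverage feedback to choose inputs rather than drawing them uniformly.

```python
import random


def clamp_percentage(value: float) -> float:
    """Hypothetical function under test: clamp a number into the range 0..100."""
    return max(0.0, min(100.0, value))


def test_known_values() -> None:
    """Unit test: check expected behavior on hand-picked inputs."""
    assert clamp_percentage(50.0) == 50.0
    assert clamp_percentage(-5.0) == 0.0
    assert clamp_percentage(250.0) == 100.0


def fuzz_clamp(iterations: int = 10_000, seed: int = 0) -> None:
    """Randomized test: check an invariant on many generated inputs."""
    rng = random.Random(seed)  # a fixed seed keeps any failure reproducible
    for _ in range(iterations):
        value = rng.uniform(-1e9, 1e9)
        result = clamp_percentage(value)
        assert 0.0 <= result <= 100.0, f"out of range for input {value!r}"


test_known_values()
fuzz_clamp()
```

The unit test documents the behavior you expect; the randomized test looks for inputs you did not think of.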

Establish observability and feedback loops

Robust observability through logs, metrics, and tracing enables teams to quickly detect and resolve failures, improving mean time to recovery (MTTR) and overall software quality. Feeding these insights back into development strengthens future code and review processes.

Implement AI governance and verification gates

All AI-generated code should pass through AI code review and policy-based validation before being merged to prevent introducing hidden defects or vulnerabilities. This governance layer ensures AI accelerates development without compromising reliability or security. For detailed implementation guidance, refer to the how-to guide for AI code assurance.
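One way to picture such a gate is as a function that compares analysis results for a change against a written policy and blocks the merge when any threshold is exceeded. The sketch below is a hypothetical illustration only: the class names, fields, and thresholds are assumptions made for this example and do not represent SonarQube's API or any specific tool's configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalysisResult:
    """Hypothetical summary of automated checks for one change."""
    new_bugs: int
    new_vulnerabilities: int
    coverage_on_new_code: float  # percentage, 0..100


@dataclass(frozen=True)
class GatePolicy:
    """Hypothetical thresholds; real values are an organizational decision."""
    max_new_bugs: int = 0
    max_new_vulnerabilities: int = 0
    min_coverage_on_new_code: float = 80.0


def evaluate_gate(result: AnalysisResult, policy: GatePolicy) -> list[str]:
    """Return the policy violations; an empty list means the gate passes."""
    violations = []
    if result.new_bugs > policy.max_new_bugs:
        violations.append(f"new bugs: {result.new_bugs} > {policy.max_new_bugs}")
    if result.new_vulnerabilities > policy.max_new_vulnerabilities:
        violations.append(
            f"new vulnerabilities: {result.new_vulnerabilities} "
            f"> {policy.max_new_vulnerabilities}"
        )
    if result.coverage_on_new_code < policy.min_coverage_on_new_code:
        violations.append(
            f"coverage on new code: {result.coverage_on_new_code} "
            f"< {policy.min_coverage_on_new_code}"
        )
    return violations


# Illustrative change: one new bug and low coverage on the new code.
result = AnalysisResult(new_bugs=1, new_vulnerabilities=0, coverage_on_new_code=72.5)
violations = evaluate_gate(result, GatePolicy())
print("PASS" if not violations else "FAIL: " + "; ".join(violations))
```

Because the gate is deterministic, the same change evaluated against the same policy always produces the same verdict, regardless of whether a human or an AI agent wrote the code.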

What are the core metrics to measure and improve system stability?

You cannot improve what you do not measure. In an agent-centric world, traditional metrics like "lines of code" are irrelevant. Leaders should instead track outcome-focused signals that reflect the actual health of the engineering system.

Beyond uptime: Tracking change failure rates and defect density

Elite engineering teams prioritize stability alongside speed. Key metrics to track include:

- Change failure rate: The percentage of deployments that trigger a production failure.

- Defect density: The number of bugs per unit of code, for example per thousand lines. As AI generates more code, confirming that defect density is not rising is critical.

- Verification debt: The volume of unvalidated code moving toward production.

- Mean time to recovery (MTTR): How fast your team can fix the system when it fails.
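Three of these metrics reduce to simple arithmetic. The sketch below shows one common way to compute them; the function names, the choice of defects per thousand lines of code, and all sample numbers are assumptions for illustration. Verification debt is left out because it depends on how a team defines "validated."

```python
from datetime import timedelta


def change_failure_rate(deployments: int, failed_deployments: int) -> float:
    """Percentage of deployments that triggered a production failure."""
    if deployments <= 0:
        raise ValueError("deployments must be positive")
    return 100 * failed_deployments / deployments


def defect_density(defects: int, lines_of_code: int) -> float:
    """Defects per 1,000 lines of code (KLOC)."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defects * 1000 / lines_of_code


def mean_time_to_recovery(recovery_times: list[timedelta]) -> timedelta:
    """Average time from failure to restored service."""
    if not recovery_times:
        raise ValueError("recovery_times must not be empty")
    return sum(recovery_times, timedelta()) / len(recovery_times)


# Hypothetical figures for one reporting period.
cfr = change_failure_rate(deployments=40, failed_deployments=3)
density = defect_density(defects=18, lines_of_code=120_000)
mttr = mean_time_to_recovery(
    [timedelta(minutes=30), timedelta(minutes=90), timedelta(minutes=60)]
)
print(f"Change failure rate: {cfr:.1f}%")
print(f"Defect density: {density:.2f} per KLOC")
print(f"MTTR: {mttr}")
```

Tracking these values per reporting period, rather than as one-off snapshots, is what shows whether stability is improving or degrading as code volume grows.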

How SonarQube helps you achieve production-ready code reliability

SonarQube is the industry-leading code trust and verification platform necessary to solve the AI accountability crisis and eliminate verification debt. By using deterministic mathematical reasoning, SonarQube delivers fast, transparent, and repeatable results that identify reliability, maintainability, and security issues across over 40 programming languages. This ensures that your code is not just functionally correct but is production-ready and built on a foundation of quality.

SonarQube integrates directly into your existing development workflow. This enables developers and platform engineering teams to maintain high standards and improve AI code quality and security as they write. By acting as an independent verification layer through automated code review, SonarQube empowers your team to adopt AI agents with confidence through AI code assurance, helping you build software you can truly trust.

Next steps for software reliability

To move forward, organizations must shift from late-stage reactive debugging to proactive software verification embedded throughout the development lifecycle. This means integrating automated code reviews, vulnerability scanning, and enforceable secure coding standards directly into developer workflows, from IDE to CI/CD. Teams should prioritize reducing verification debt by ensuring every change, especially AI-generated code, is validated against consistent code quality and security criteria before it reaches production. Investing in continuous code cleanup and code refactoring will further help control technical debt, making systems easier to maintain and less prone to failure over time.

Equally important is building a culture of accountability and continuous improvement around software quality and application security. High-performing teams establish clear ownership of reliability metrics, strengthen feedback loops through observability, and treat every incident as an opportunity to improve their systems and processes. As AI becomes a permanent part of software development across programming languages, success will depend on combining its speed with deterministic validation. By adopting a "trust but verify" mindset and reinforcing it with automation, organizations can confidently scale development while maintaining resilient, production-ready systems.