Cleaner pull requests, first time

Routine AI mistakes are fixed before code reaches review. Reviewers spend their time on logic and architecture — not cleanup.

SonarQube Agentic Analysis verifies code written by AI agents against your team's quality and security standards while the AI is still writing. Bugs get fixed in seconds — not hours later in code review.

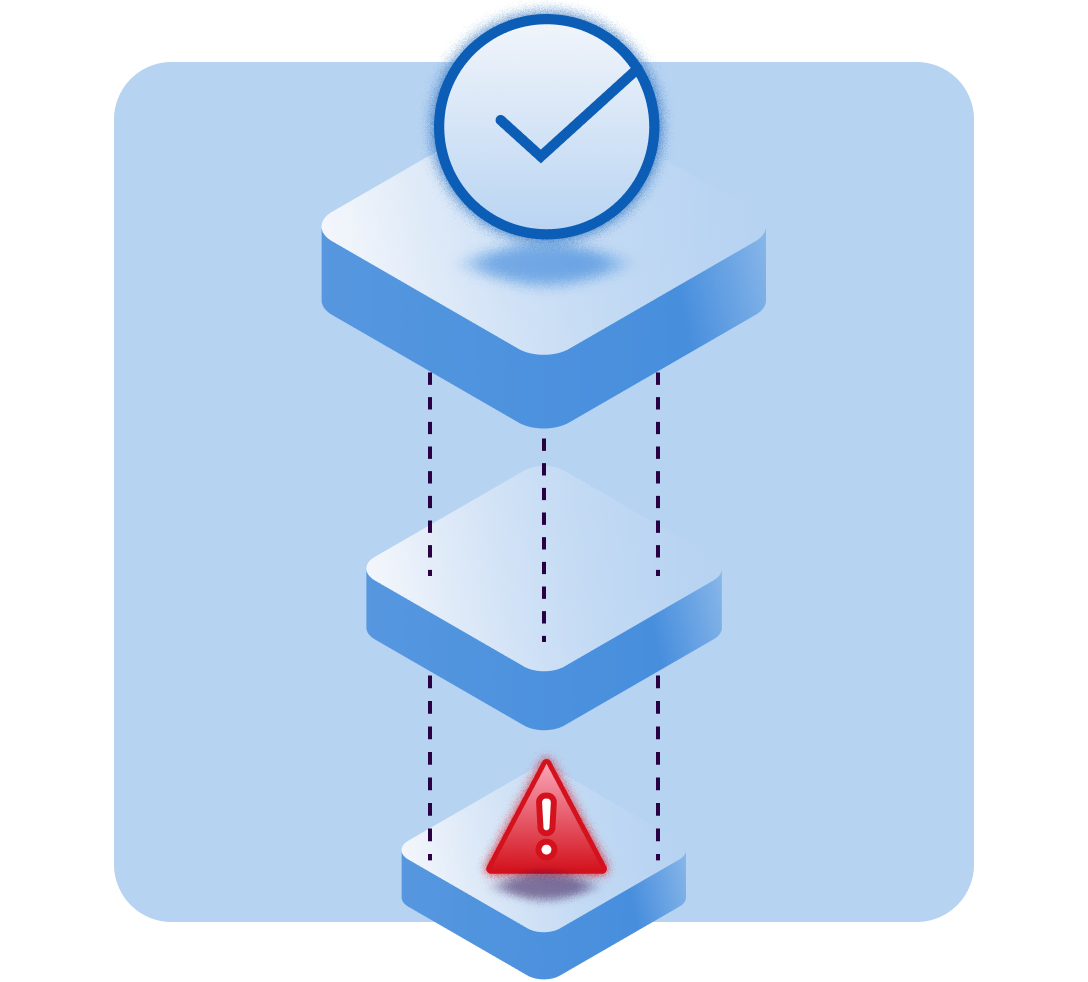

Basic local linter checks are fast, but too shallow. Pull request review and CI are trusted, but too late for teams to discover routine AI-generated issues. That creates avoidable rework, review churn, and lower confidence in AI-assisted development.

Basic code checkers look at only one file at a time. They miss bugs that appear when different parts of your codebase interact.

By the time CI flags a problem, the developer has moved on. Switching back to fix it costs time, focus, and momentum.

Senior engineers and security teams waste review cycles catching routine AI mistakes instead of focusing on architecture and logic.

Without a shared standard, teams using multiple AI coding tools get inconsistent code quality across the same codebase.

Agentic Analysis applies the full depth of SonarQube's analysis — the same coding standards your teams trust in CI — directly inside the AI coding workflow.

Issues are caught and fixed during code generation — not hours later when a developer has to stop what they're doing to clean up.

Agentic Analysis uses cached data from your previous CI builds to understand how your entire codebase connects, so it catches cross-file bugs that single-file checkers miss.

No new rules to define. Agentic Analysis uses the quality profiles your team already enforces in SonarQube — across every AI tool on the team.

Agentic Analysis checks for vulnerabilities, reliability issues, maintainability problems, and exposed secrets. Coverage is not limited to security or to style.

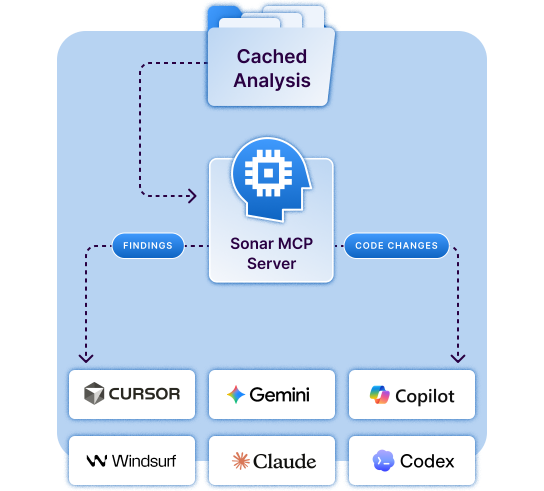

Agentic Analysis connects through the SonarQube MCP Server to Cursor, GitHub Copilot, Claude Code, Windsurf, Gemini CLI, and any MCP-compatible workflow.

One verification standard for every AI coding tool on the team. No more inconsistent code quality when developers use different assistants.

Agentic Analysis works inside the AI's coding loop — before the developer sees anything or opens a pull request.

Sonar Context Augmentation gives the AI agent your project's quality rules and code context before it writes a line.

Your AI coding tool (Cursor, GitHub Copilot, Claude Code, or any MCP-compatible tool) generates code as it normally would.

Agentic Analysis automatically checks the code against your SonarQube quality profiles using full project context — in seconds.

The AI uses Sonar's specific, rule-based findings to fix its own mistakes and re-verify — before the developer ever sees the code.
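The generate, verify, fix, and re-verify steps above can be sketched as a small loop. This is an illustrative sketch only, not Sonar's implementation: `Finding`, `verify_and_fix`, `toy_analyze`, `toy_fix`, and the rule name `hardcoded-secret` are all hypothetical stand-ins, and no real SonarQube or MCP API is called.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Finding:
    """One rule-based issue reported by the verifier (hypothetical shape)."""
    rule: str
    message: str


def verify_and_fix(
    code: str,
    analyze: Callable[[str], List[Finding]],
    fix: Callable[[str, List[Finding]], str],
    max_rounds: int = 3,
) -> Tuple[str, List[Finding]]:
    """Re-run analysis after each fix until the code is clean or rounds run out."""
    findings = analyze(code)
    rounds = 0
    while findings and rounds < max_rounds:
        code = fix(code, findings)   # the agent corrects its own output
        findings = analyze(code)     # re-verify the corrected code
        rounds += 1
    return code, findings


# Toy stand-ins for the verifier and the AI agent, for illustration only.
def toy_analyze(code: str) -> List[Finding]:
    findings = []
    if 'password = "' in code:
        findings.append(Finding("hardcoded-secret", "Secret is hard-coded"))
    return findings


def toy_fix(code: str, findings: List[Finding]) -> str:
    if any(f.rule == "hardcoded-secret" for f in findings):
        return code.replace(
            'password = "hunter2"', 'password = os.environ["DB_PASSWORD"]'
        )
    return code


draft = 'password = "hunter2"'
final_code, remaining = verify_and_fix(draft, toy_analyze, toy_fix)
print(final_code)   # password = os.environ["DB_PASSWORD"]
print(remaining)    # []
```

The `max_rounds` cap is a design assumption of this sketch: it keeps the loop from running indefinitely if a fix never clears a finding, in which case the remaining findings are returned for a human to review.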

Many meaningful issues cannot be detected from a single file alone. A change that looks correct in isolation may still depend on a deprecated API, unsafe usage pattern, missing type relationship, or broader project logic. Agentic Analysis uses SonarQube project context to make fast feedback accurate enough to trust.
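To make the single-file limitation concrete, here is a toy example that uses Python's standard `ast` module. It is a hedged illustration, not how SonarQube analyzes code: the two-file project, the `@deprecated` decorator convention, and the helper names `deprecated_names` and `calls_to` are assumptions made up for this sketch.

```python
import ast
from typing import Dict, List, Set

# A toy two-file project. The deprecation is only visible in crypto_utils.py.
project: Dict[str, str] = {
    "crypto_utils.py": (
        "def deprecated(fn):\n"
        "    return fn\n"
        "\n"
        "@deprecated\n"
        "def legacy_hash(data):\n"
        "    return hash(data)\n"
    ),
    "login.py": (
        "from crypto_utils import legacy_hash\n"
        "\n"
        "def check(pw):\n"
        "    return legacy_hash(pw)\n"
    ),
}


def deprecated_names(sources: Dict[str, str]) -> Set[str]:
    """Collect names of functions decorated with @deprecated in the given files."""
    names: Set[str] = set()
    for text in sources.values():
        for node in ast.walk(ast.parse(text)):
            if isinstance(node, ast.FunctionDef) and any(
                isinstance(d, ast.Name) and d.id == "deprecated"
                for d in node.decorator_list
            ):
                names.add(node.name)
    return names


def calls_to(source: str, targets: Set[str]) -> List[str]:
    """Return the called function names in one file that appear in `targets`."""
    return sorted(
        node.func.id
        for node in ast.walk(ast.parse(source))
        if isinstance(node, ast.Call)
        and isinstance(node.func, ast.Name)
        and node.func.id in targets
    )


login = project["login.py"]
# Single-file view: only login.py is known, so nothing looks deprecated.
single_file = calls_to(login, deprecated_names({"login.py": login}))
# Project view: the deprecation in crypto_utils.py is visible.
cross_file = calls_to(login, deprecated_names(project))
print(single_file)  # []
print(cross_file)   # ['legacy_hash']
```

The same call in `login.py` is reported only when the checker can see where `legacy_hash` is defined, which is the kind of project context the paragraph above describes.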


Agentic Analysis extends the SonarQube verification teams already trust in CI into the moment AI-generated code is created, where issues are cheapest to catch and easiest to fix.

Agentic Analysis is built on the same Sonar analysis foundation teams already use to improve code quality and code security.

Bring SonarQube context, baseline, and standards into the agent loop instead of relying only on local heuristics.

Catch and correct routine AI-generated issues before they become reviewer cleanup, not after.

See how Agentic Analysis fits into your team's existing AI workflow — no new tools to learn, no new standards to define.

SonarQube Agentic Analysis is a real-time code verification service from Sonar. It connects AI coding tools, such as Cursor, GitHub Copilot, and Claude Code, to SonarQube's analysis engine through the Model Context Protocol (MCP). While the AI agent is writing code, its output is automatically checked against your team's SonarQube quality and security standards. The agent then fixes the reported issues and re-checks, all before the developer opens a pull request.