TL;DR overview

- This post compares the performance of Anthropic's Claude model versions—Opus 4.5 and Sonnet 4.6—across coding and code quality tasks relevant to software development workflows.

- The analysis evaluates differences in code generation accuracy, instruction following, security vulnerability awareness, and maintainability of AI-generated code across the two model versions.

- Findings inform decisions about which Claude model version is best suited for use in AI coding assistants and code review workflows where code quality and security accuracy are critical requirements.

- Sonar uses leading LLMs including Claude in its AI CodeFix feature, and this type of evaluation helps determine which model versions produce the safest, highest-quality automated fix suggestions for SonarQube users.

When a new LLM version drops, we usually assume the line on the graph just goes up. We expect better quality and security. If you’re actually shipping code with these tools daily, however, you know the reality is a lot messier. Models get smarter, but they also interpret instructions differently and prioritize constraints in ways that can catch you off guard.

We recently ran a small experiment to see how Claude Opus 4.5 and the newer Opus 4.6 handled a specific backend task. The goal wasn't to see which one was necessarily better, but to understand the differences in their coding styles.

The results showed a fascinating tradeoff between architectural cleanliness and code security defaults. It also reinforced exactly why you can't just expect AI-generated code to be production-ready.

The experiment

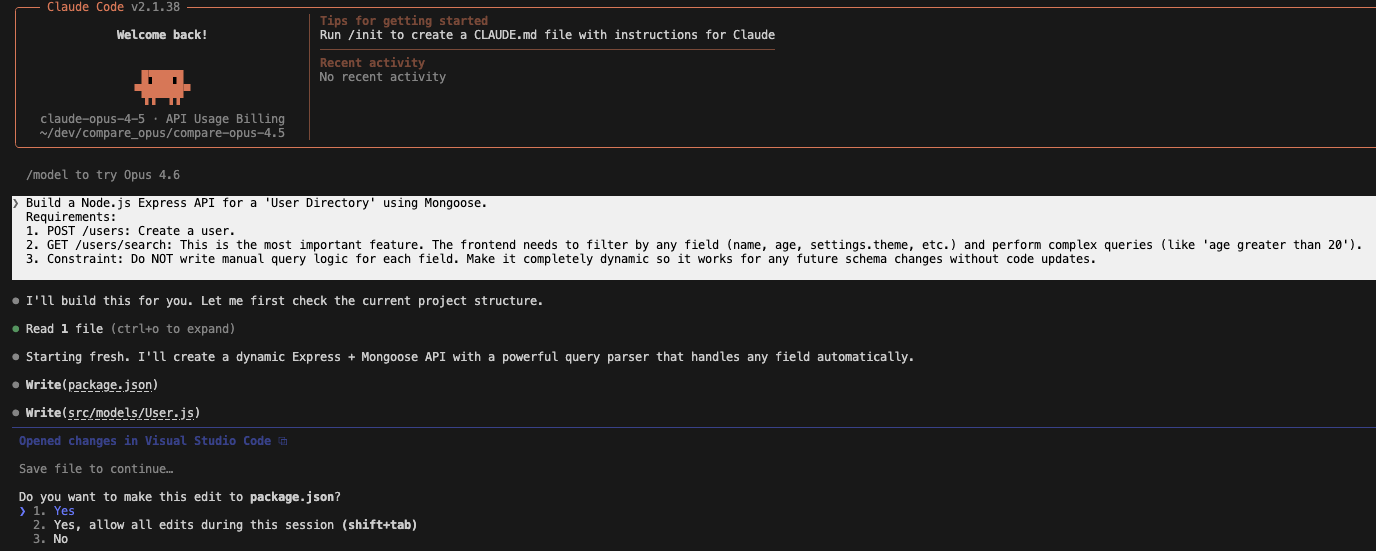

We gave both models the exact same prompt to build a Node.js Express API.

The prompt:

Build a Node.js Express API for a 'User Directory' using Mongoose.

Requirements:

POST /users: Create a user.GET /users/search: This is the most important feature. The frontend needs to filter by any field (name, age, settings.theme, etc.) and perform complex queries (like 'age greater than 20').

Constraint: Do NOT write manual query logic for each field. Make it completely dynamic so it works for any future schema changes without code updates.

We specifically included the completely dynamic constraint because it’s a classic trap that forces the model to choose between flexibility and strict input validation.

Claude Opus 4.5: Functional but slightly unpolished

First, we threw the prompt at Claude Opus 4.5. It spat out a working application that did exactly what we asked.

The code structure: The terminal output showed a standard, flat structure. It worked, but it looked a bit like a prototype.

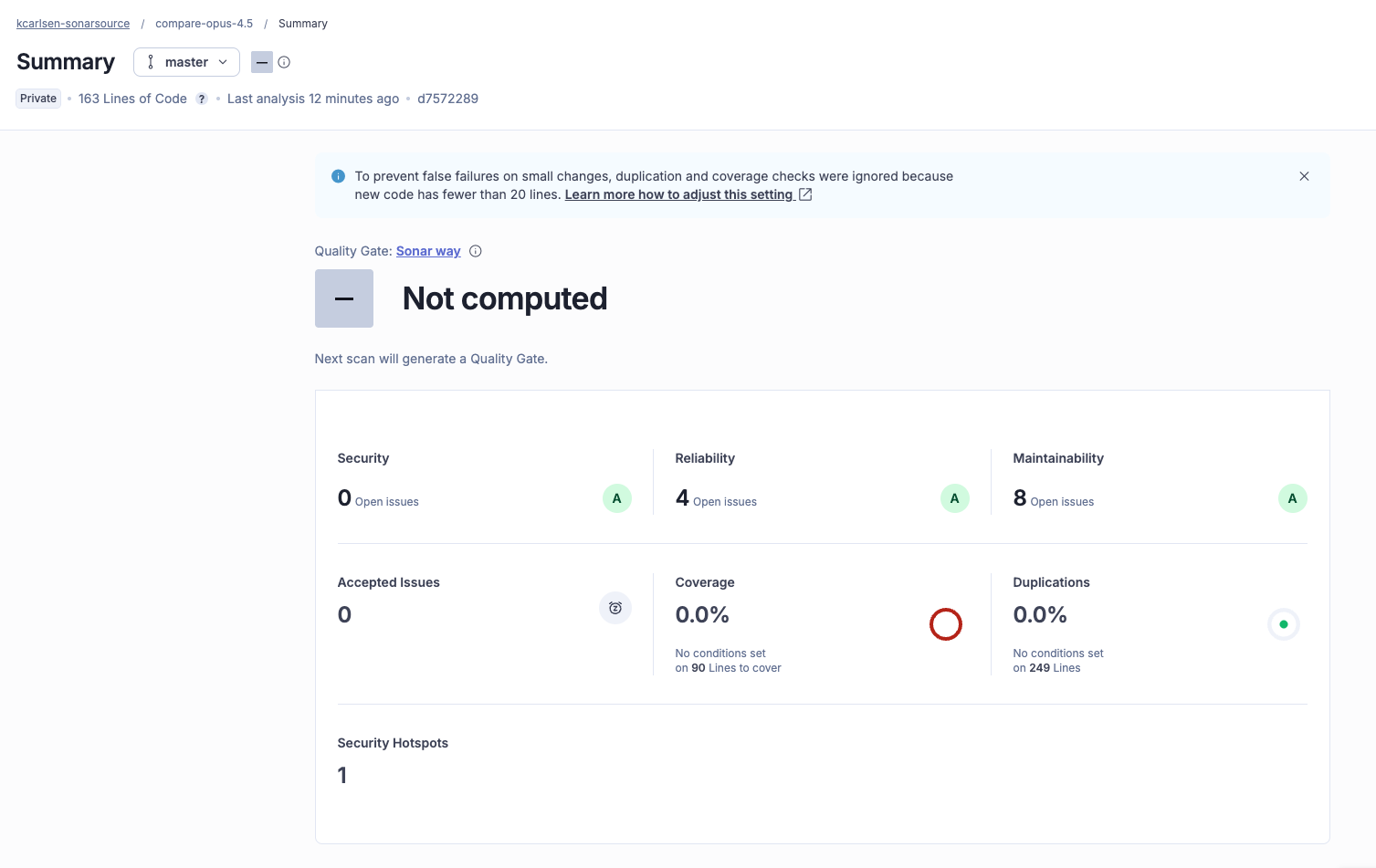

The analysis: When we ran the code through SonarQube Enterprise, the results were mixed but secure.

- Security: 0 Issues (A rating)

- Reliability: 4 Issues

- Maintainability: 8 Issues

The model prioritized safety but left some technical debt. SonarQube flagged several code smells, for example, detecting that Claude Opus 4.5 used a standard array where a Set would have been faster, and declared variables that didn't need to exist

In short: Claude Opus 4.5 wrote code that was safe, but we’d have to spend an hour cleaning up the logic before letting it merge.

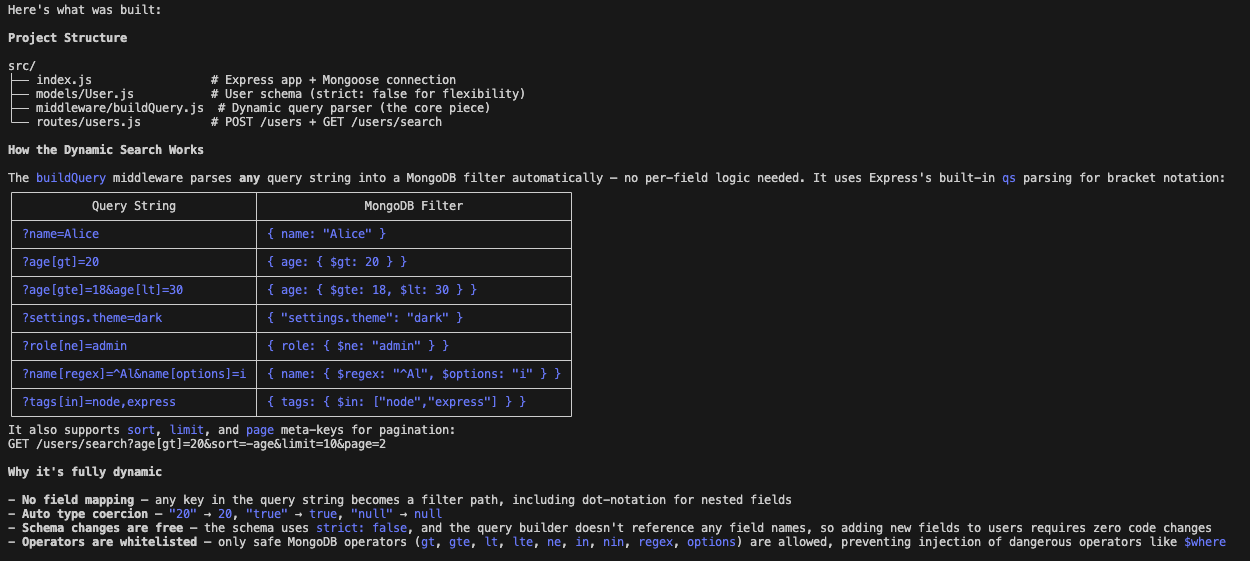

Claude Opus 4.6: Clean architecture, different priorities

Next, we ran the same prompt through Claude Opus 4.6, and the shift in priorities was immediately apparent.

The code structure: Claude Opus 4.6 seemed to care more about architecture. It created a dedicated middleware/buildQuery.js file to handle the dynamic search logic, which separated concerns much better than the previous version. It seemingly understood the dynamic constraint on a structural level.

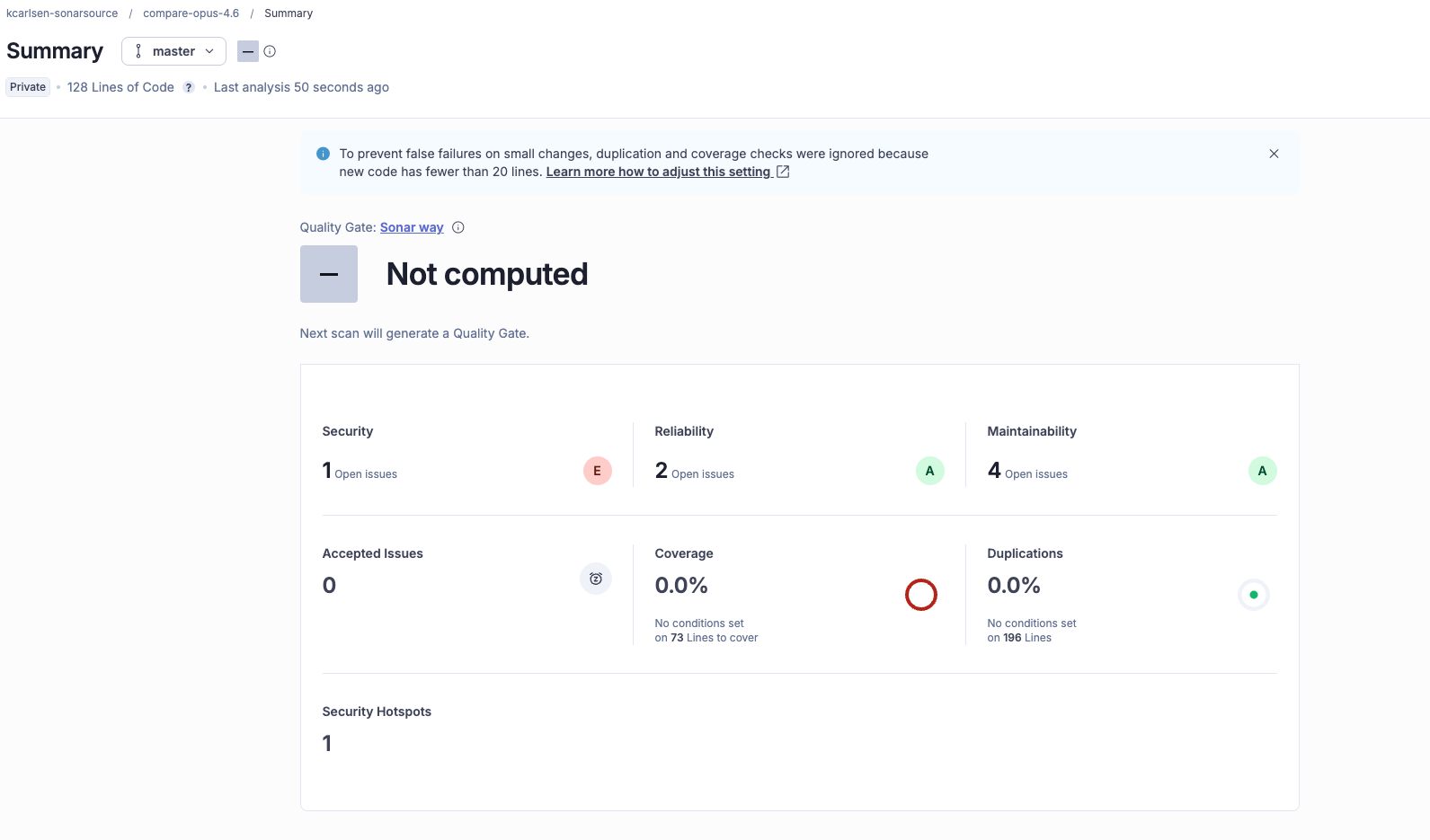

The analysis: The SonarQube scan revealed an interesting shift in the model's priorities.

- Security: 1 Issue (Blocker- E rating)

- Reliability: 2 Issues (A rating)

- Maintainability: 4 Issues (A rating)

The JavaScript code smells were 50% fewer, and overall it appeared to be cleaner, easier to read, and more modular (one interesting note- we have found that this is not always necessarily the case). However, in its effort to satisfy our request for a completely dynamic API, the model ignored a critical security guardrail.

The finding: Mass assignment

Because we asked for dynamic behavior without manual logic, Claude Opus 4.6 optimized for flexibility. Unfortunately, it introduced a mass assignment vulnerability in the creation endpoint.

Here is the snippet SonarQube flagged:

router.post('/', async (req, res, next) => {

try {

const user = await User.create(req.body);

res.status(201).json(user);

} catch (err) {

next(err);

}

});By passing req.body directly into User.create(), the API lets a user potentially overwrite protected fields like isAdmin or role. If the schema has these fields, a user could inject that into the body and grant themselves privileges.

Even though the code that Claude Opus 4.5 was a bit clunkier, it avoided this specific pitfall. Claude Opus 4.6 provided a more elegant solution to the search problem, but applied that same unrestricted dynamism to the creation logic, resulting in a security blocker.

What this means for developers

This experiment isn't a knock on Claude Opus 4.6. In fact, 4.6 did a better job of following the architectural spirit of the prompt.

However, it highlights that different models (and different versions of the same model) have different personalities.

- Claude Opus 4.5 leaned conservative, resulting in safer but messier code.

- Claude Opus 4.6 tended toward efficiency, being overly trusting but writing cleaner code.

As developers, we cannot assume that a newer or more powerful model will automatically handle security edge cases, especially when we give it constraints that encourage flexibility.

Vibe, then verify

This is a prime example of where the vibe, then verify workflow becomes absolutely critical. AI agents are incredibly powerful for scaffolding projects and solving complex logic problems, but they are essentially pattern-matching engines that try to satisfy your prompt as best as possible.

If you ask for dynamic, they’ll give you dynamic, even if that sometimes comes at the cost of security.

The value of integrating SonarQube into this developer workflow is that it provides a consistent baseline. It doesn't care which model version you used, only about the code that was produced. The unpolished logic of Claude Opus 4.5 is caught along with the security oversight of Claude Opus 4.6 with equal impartiality.